ngl, the obsession with just making LLMs bigger and hoping they stop lying to us is getting old. It feels like we've reached the limit of what "fancy autocomplete" can actually do for society. You can't, for example, run a power grid or design a microprocessor on a model that might decide to hallucinate just because the prompt was worded weirdly.

I was looking at the speaker list and panel notes for the Milken conference, and it's pretty telling who they have on stage this year. Seeing people from ASML and Google sit down with Logical Intelligence to talk about "deterministic" AI makes it feel like the pivot is finally happening in the background.

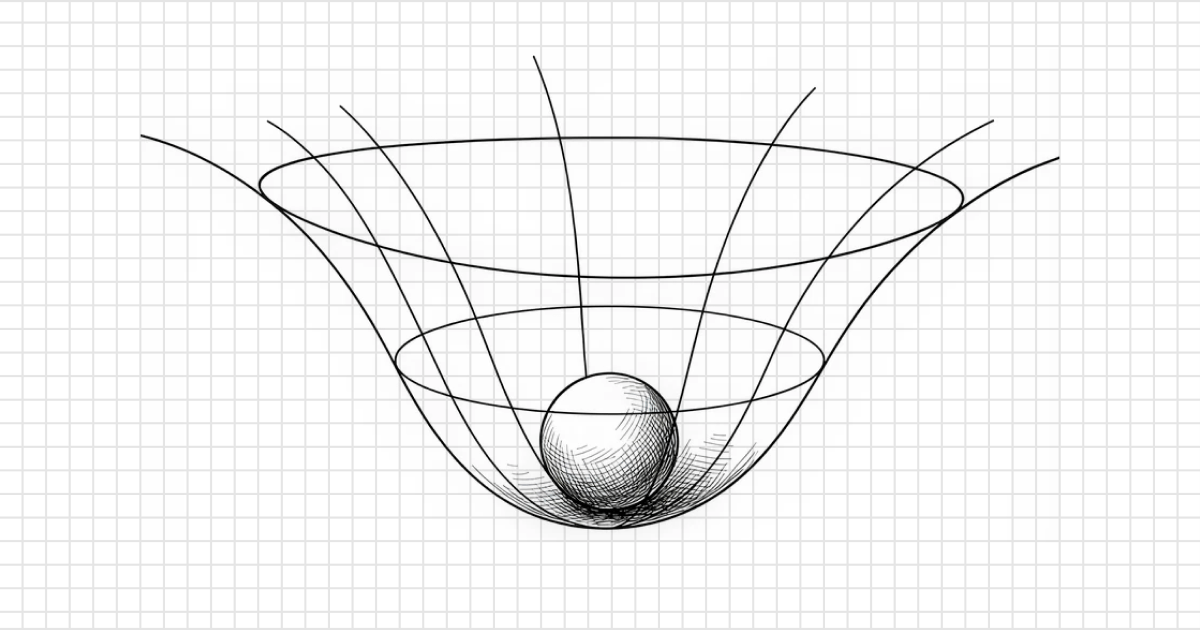

The future isn't just a smarter chatbot. It's about these energy-based models that actually understand constraints and mathematical logic. The industry is finally moving from "AI for fun" to "AI for things that literally cannot fail". Kind of a reality check for the Silicon Valley hype cycle, but honestly it's a relief to see a focus on correctness for once.

why I think the "chatgpt era" of AI is already hitting a wall

by u/GodBlessIraq in Futurology

26 comments

The current AI trajectory is shifting from probabilistic Large Language Models (LLMs) to deterministic, energy-based reasoning architectures. This post explores how major institutional players at the 2026 Milken Institute Global Conference are prioritizing “correctness” over generative “vibes”, suggesting a future where AI is integrated into mission-critical infrastructure with mathematical certainty rather than just next-token prediction.

I think, just like in the dot-com bubble era, there are too many companies in the same space. Eventually, some of them need to fall for the strongest to survive. OpenAI was first out of the gate, but we see it’s now losing a bit of ground to Anthropic & Google.

Don’t just look at the large language models. These massive data centers have the ability to train all kinds of neural networks to do all kinds of different things.

Note we’ve long had the other type of AI, deterministic reasoning models; it was LLMs that were novel. Self-driving cars are a good example of pre-LLM AI, and they work very well. The strong chess algorithms are another example, and they have been around for a long time. I don’t really think it’s appropriate to call either AI. They aren’t really emulating intelligence in any way. The holy grail is AGI, where a computer is actually emulating intelligence, not just doing fancy probabilistic pattern matching or running deterministic algorithms.

Hit a wall a while ago. The progress we’ve been seeing recently is more in applying the tech rather than the tech improving.

Meanwhile we’re starting to see signs of model collapse in GPT so things might actually get worse.

Yeah I think determinism will win in the end. You can’t just leave things to chance and probabilities.

You’d want answers to be consistent 100% of the time, and not “well it could be this or that” and hope that the answer will be correct.

Why is anything right or wrong? Because we have theories and beliefs (which are a kind of theory), and within those frameworks they determine whether something is right or wrong. If something is wrong, then it’s because the theory is wrong.

It has nothing to do with chatbots probabilistically determining the answer is A instead of B. Chatbots don’t come up with theories.

No one ever claimed that hallucinations would disappear with scaling. Hallucinations are part of the architecture. They won’t go until we have new arch that isn’t generative. Gen ai is a stepping stone, not the final boss.

For a future-focused sub, the posters here seem to be very poorly informed on what the majority of companies are actually doing with AI and how they are using it.

Where might I go to read more about “deterministic AI” and/or “energy-based models?”

There is no “understanding” in LLMs; that would imply some kind of sentient awareness. These are statistical machines, designed to be convincing enough to trick people into believing they are aware. It’s a bit like recording every possible answer on a recorder, then playing the right one back when someone asks. This is Plato’s Cave and Jean Baudrillard’s Simulacra and Simulation. And, more recently, Harry Frankfurt’s On Bullshit:

“The liar cares about the truth and attempts to hide it; the bullshitter doesn’t care whether what they say is true or false”

LLMs seem to be actually getting worse, or perhaps I’m just getting better at detecting their lies and incongruities.

Yeah… within the scope of the commonly talked-about LLMs. But it’s always good to keep in mind that the vast majority of AI is going to be narrow-scope models that you’ve never heard of, and a lot of those are accurate, energy-efficient and much, much faster than anything an LLM can do.

Those are the purpose built AI that aren’t trying to ever become AGI and generally, they do their jobs a lot better.

So one solution is just to stop trying to start from the top of complexity, and instead start from the bottom and work up. The stock-pumping ability of selling people the dream of AGI makes a lot more money than any actual AI model can. That seems to be the real dilemma here.

When it comes to achieving AGI, they have no idea what they’re doing or what approach is gonna work. It’s probably gonna wind up being some combination of approaches. On top of that, it might not even be that useful, because you already have 8 billion humans on the planet that effectively are already AI, but do so with way less energy input.

What you don’t have is a ton of cheap labor. It’s really robotic labor, coupled with AI that’s good enough to run it, that’s gonna make the big difference, not the big data centers trying to be an AGI that we don’t really need.

The entire history of AI since the ’60s has been “if only we had more processing / storage / nodes / connections / money / etc. then I’m sure that THIS time the magical statistical box will suddenly become intelligent through some form of critical mass via a mechanism that has never been witnessed, hypothesised or proven, ever.”

Every time, it just plateaus that little bit higher while consuming exponentially more resources.

And you know what that means? When it takes exponentially more resources to get a logarithmic improvement? It means it ain’t ever gonna happen. Certainly not when we can’t even describe any kind of method where that would occur.
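To put rough numbers on that diminishing-returns claim, here is a small sketch. It assumes, purely for illustration, that capability $C$ grows with the logarithm of resources $R$; the constants $a > 0$ and $b$ are hypothetical and do not come from any measurement in this thread.

$$C(R) = a \log R + b \quad\Longleftrightarrow\quad R(C) = e^{(C - b)/a}$$

$$C(kR) - C(R) = a \log k$$

Under that assumption, multiplying resources by $k$ buys only a fixed increment $a \log k$, so each equal step up in capability $\Delta$ costs a factor of $e^{\Delta / a}$ more resources than the step before it.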

As far back as the origins of the entire area of study, through neural networks and genetic algorithms and so on… it’s been the same nonsense based on the same flawed premise: that “intelligence” is just a matter of accumulating a critical mass of dumb systems. It’s never been true.

And now we have pumped a vast portion of worldwide GDP into it, caused a glut of datacentres, full of millions of specialist AI processors, causing worldwide shortages, trained on the entire digital history of the human race, and you know what… it still hasn’t happened.

One day, AI people will get off their arses and actually think about the problem they’re trying to solve, instead of just blatting out another identical PhD paper to all those before it, but with a slight twist, and then fleeing as soon as the inevitable plateau in their models starts to show.

Until then, apparently, we’re just gonna piss away the world’s resources (computing and otherwise) on another thing that has “funny” conversations but can still never infer, innovate or intuit… just apply statistics in the brute-force manner of all the automation that came before it.

/tinfoil hat on

I don’t think the point of LLMs was to make anything useful. I think the point was to test-run public perception, fine-tune models, and gather training data for whatever is next. When something is free, you’re the product, and LLMs have predominantly been free to use.

Of course, this is merely a gut feeling of mine and not actual evidence. And I don’t know what’s next, either.

/tinfoil hat off

Deterministic AI is reinventing programming languages

A lot of companies have figured out how to keep it constrained, and you can see the difference in the output it produces. But in the end, making machine learning algorithms 100% accurate is not possible. The 80-90% range is the max they can do.

OCR is a really old and well-developed machine learning application. It still has 80% accuracy in the real world.

The wall is compute efficiency. Sam Altman promised an improvement in tokens/compute, but the development has been mostly linear. Cost is going to ground expectations in reality over the next couple of years. This won’t be a tool everyone can afford.

Google was always going to be the “winner” in all of this. They have the infrastructure already in place and have been working on building it for two decades.

It does seem that with LLMs the current situation is throwing more hardware to make bigger/faster/better models.

But it does seem that the models are much the same. Yes, more capable at what they’re designed to do, but not really anything different. Yes, there’s advances in making stuff more efficient etc.

For sure, one of the issues is people just using it inefficiently. Like for example people doing basic manipulation of a csv file that could be done with a single line of awk, sed and grep. Or asking what the weather is when you can just go to the website that shows it in more detail. But I guess when you have a hammer, everything looks like a nail.

LLMs have been game changing, but I think more advanced AI is going to be much different.

Yes, they do things extremely fast compared to humans, but also often they are not as good as humans.

For me the big issue is the sheer amount of data that they need. Compare with humans: a 10-year-old hasn’t read the entirety of human literature, yet can reason and hold a conversation. And a programmer doesn’t need to read every single open source project to be able to code.

Personally, I think the big breakthrough will be in reducing the necessary training data, self-learning (without retraining) and some kind of actual reasoning. For example, instead of training it on billions of lines of code, train it on just the language specification and let it use reasoning to actually code, rather than how it works currently.

The other glaring issue is cost. Right now everyone’s AI usage is heavily subsidized. What happens when actual costs are passed to consumers? Will they just say fuck it, not worth it? Will businesses say the same (I’m not talking about the big corporates that have money to burn, but the masses of small and medium businesses who can’t afford shitloads in AI subscriptions)?

>it feels like we’ve reached the limit of what “fancy autocomplete” can actually do

This couldn’t be farther from the truth. Your entire post is just “ignore all the evidence and data and just pretend it’s the exact opposite.”

We can have hallucination-free inference; it just costs 3x as much. It’s like how autopilot works: a signal comes in, three different computers verify it, and a 2-out-of-3 vote decides whether it’s a false signal. Many of them already do this: run an inference, run a check, then double-check if the result doesn’t match the expected output.
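The 2-out-of-3 idea can be sketched roughly as below. This is a minimal illustration, not how any particular vendor does it: `majority_vote`, `voted_inference` and the `model` callable are hypothetical names, and the stub model just replays canned answers.

```python
from collections import Counter
from typing import Callable, Optional, Sequence


def majority_vote(answers: Sequence[str], quorum: int = 2) -> Optional[str]:
    """Return the answer at least `quorum` runs agree on, or None if no quorum."""
    if not answers:
        return None
    # Normalize lightly so trivial formatting differences don't split the vote.
    normalized = [a.strip().lower() for a in answers]
    winner, count = Counter(normalized).most_common(1)[0]
    return winner if count >= quorum else None


def voted_inference(
    prompt: str,
    model: Callable[[str], str],  # hypothetical: any function mapping prompt -> answer
    runs: int = 3,
    quorum: int = 2,
) -> Optional[str]:
    """Call `model` `runs` times (hence roughly 3x the cost) and keep an agreed answer."""
    answers = [model(prompt) for _ in range(runs)]
    return majority_vote(answers, quorum)


if __name__ == "__main__":
    # Stub standing in for a real model: two runs agree, one drifts.
    canned = iter(["Paris", "paris ", "Lyon"])
    print(voted_inference("Capital of France?", lambda _prompt: next(canned)))
```

Worth keeping in mind that a vote like this only filters out answers the runs disagree on. If all three runs share the same mistake, it passes straight through, which is why autopilot-style setups tend to use independent computers rather than three copies of one.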

The machines that keep lying to you are just cheap. The hallucinations are how they work but their errors aren’t world ending.

The “next gen” ones just have tons of redundancy and human oversight. It’s just way faster, with a smaller risk, paired with a brand new skill set. Just like how a practiced pilot knows why an autopilot could interpret humidity in the sensors as turbulence or whatever, we’re going to see that be the default for most knowledge work.

this is like saying it’s finally winter after autumn. guys. autumn has ended. the last leaf has fallen. it’s finally happening, all in the background. winter is coming 🐺🦌🗡️🐉

I’m confident the technology will plateau, but we’re not there yet. Not because of LLM capability expansion, but more because of the accompanying technologies. We’re about to see an explosion of vulnerabilities found through AI detection, but that will level off as it gets integrated into the SDLC. We’re going to see tons of agentic use cases; most will fail but some will survive. Then there are localized LLM use cases due to data sovereignty concerns, etc.

A lot of specialists have been saying this for a while now. That’s why Yann LeCun left Meta, because he wants to concentrate on world models. There are all sorts of other types of AI being researched. LLMs just happen to have the hype at the moment.

The transition to AI systems is what will push things forward. An LLM with bad tools and no context is dumb. An LLM with a treasure trove of accessible MCP tools, a KG, and a large enough context window is insanely effective, imho. The systems are what really need to catch up, and they are what most in my industry are focusing on now.

I’ve been seeing significant improvements, especially regarding coding intelligence. Nothing that makes me think we’re hitting a wall.