Every major AI figure has a different picture of AGI. Musk sees exponential curves. Hassabis sees a path through world models. LeCun thinks the entire LLM focus is a dead end. The interesting development is that LeCun is putting his money where his mouth is. He has taken a chair position at Logical Intelligence, which builds energy-based models (EBMs). Their premise: intelligence isn’t about guessing the next word, it’s about searching for solutions that satisfy constraints. Their Sudoku demo shows Kona solving puzzles in seconds because it can optimize rather than predict one cell at a time.
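To make the "optimize rather than predict" idea concrete, here is a minimal Python sketch that treats Sudoku as an energy function counting constraint violations. It is an illustration only, not Kona's or Logical Intelligence's actual method; the names `energy` and `_duplicates`, the `solved` example grid, and the particular choice of energy are all assumptions made up for this example.

```python
from typing import List

Grid = List[List[int]]  # 9x9 grid, 0 marks an empty cell


def _duplicates(cells: List[int]) -> int:
    """Count repeated non-zero digits in one group of cells (hypothetical helper)."""
    filled = [v for v in cells if v != 0]
    return len(filled) - len(set(filled))


def energy(grid: Grid) -> int:
    """Assumed energy: duplicate digits in rows, columns and boxes, plus empty cells.

    A grid has energy 0 exactly when it is completely filled and violates
    no Sudoku constraint.
    """
    total = 0
    for i in range(9):
        total += _duplicates(grid[i])                          # row i
        total += _duplicates([grid[r][i] for r in range(9)])   # column i
    for br in range(0, 9, 3):
        for bc in range(0, 9, 3):
            box = [grid[r][c] for r in range(br, br + 3) for c in range(bc, bc + 3)]
            total += _duplicates(box)                          # 3x3 box
    total += sum(row.count(0) for row in grid)                 # unfilled cells
    return total


# A valid filled grid generated from a simple shifting pattern (illustrative data).
solved: Grid = [[(3 * (r % 3) + r // 3 + c) % 9 + 1 for c in range(9)] for r in range(9)]

if __name__ == "__main__":
    print(energy(solved))
```

In this framing a solver would search for a grid with energy 0 rather than predict cells one after another; the search procedure itself is deliberately left out of the sketch.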

But even if EBMs work for constrained reasoning, does that get us to AGI? The founder says AGI will be an “ecosystem” of compatible models. That’s a much messier picture than “scale LLMs until something clicks”.

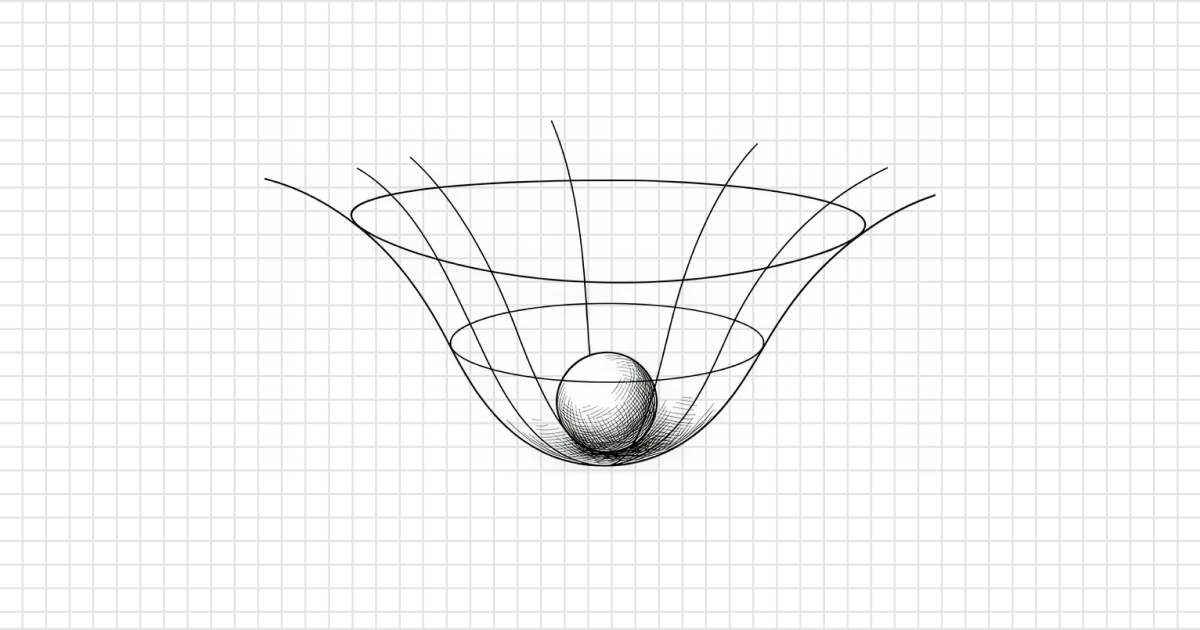

It feels like we’re all grabbing different parts of the elephant. So far, nobody has seen the whole animal.

The AGI debate is starting to sound like the blind men and the elephant

by u/bully309 in Futurology

11 comments

Intelligence is merely the outcome of any complex organism or technology, it’s not something that will be achieved once, we will start to see intelligence in all sorts of different models soon.

The funny thing is that every generation of AI research thinks it has finally found the “real” path to intelligence. Neural nets, symbolic AI, expert systems… now LLMs vs world models vs EBMs. In hindsight it’s usually a mix of ideas that ends up working.

When the expert systems bubble and earlier AI bubbles popped, it looked similar to today. Some experts tried world models; others said it was impossible without embodiment. My favorite answer is this:

“Intelligence can be usefully discussed only in terms of domains of thought and action. From this I derive the conclusion that it cannot be useful, to say the least, to base serious work on notions of ‘how much’ intelligence will be given to a computer. Debates based on such ideas, e.g. ‘Will computers ever exceed man in intelligence?’, are doomed to sterility.”

Computer Power and Human Reason (1976) pg 223

https://en.wikipedia.org/wiki/Computer_Power_and_Human_Reason

Dunno why people debate about an ending nobody can predict.

Maybe AGI is not a super LLM and maybe it will be?

Maybe humanity will reach AGI via different ways, LLMs (or not) and some other ways?

And maybe LLMs will cap out at a 200 IQ equivalent, and that will already be a great achievement?

I work in tech, and SOTA models like GPT 5.4 are already AGI for 90% of white-collar work. The only confusion lies in understanding how to use agents: people send one a complex problem and expect it to “1-shot solve it like a perfect computer”. It’s not a computer. Agents work like humans; try bringing in your best engineer at 8AM, giving him a whiteboard with a problem, and saying “you have 30 sec to solve this”. Of course he will fail. Now give him 2-3 hours and a calculator, a stack of reference books, and a computer, and he will solve it brilliantly. All intelligence needs to leverage tools & references to solve problems.

I’m convinced that development on new models could stop here and we’d spend the next 20 years simply understanding how to use & hook up agents with this model into existing workplaces/society. Even in this scenario you’ll be looking at companies slashing their workforce by 50% or more.

Now consider the fact that the progress of model improvements is not stopping. We’re in for a crazy ride. I really believe that the diffusion into the greater economy is the only thing that will hold it back. Example: you’ll still find fax being used in some countries & banking, not because there aren’t more modern solutions, but because the people in charge of that work opted to keep it that way.

I keep telling my friends & family that the next few years are going to be insane. Like, we will cure cancer & diseases, but unemployment will start rising and never stop. It’s going to be amazing and really bad in so many ways.

what is the concern here, and does AGI matter, or is a product that can “search for solutions and satisfy constraints” sufficient to cause major disruptions?

in general, what percent of the workforce – and i’m talking about humans here – truly “generate” new and novel ideas versus how many take requirements (constraints) and fulfill orders?

from a waitress at a restaurant to a structural engineer…and *everything* in between – is AGI truly needed or would something that can take input (what you want for dinner or a design of a building) and provide output (upsell sides and specials or produce technical specs in alignment with local and national codes) be enough?

while there might be different definitions to what AGI actually means – each with their own reality on achieving and implementing whatever that definition is – i’d say artificial intelligence as it *exists today* is already enough to be a major disruptor to the workforce and thus the current human experience (given employment is the driver of basic human needs of shelter and food).

My AGI contribution to the debate:

>*“Make something that does something. Period!”*

On a serious note, a lot of context can be gleaned from history of previous inventions and innovations be they:

* Tools

* Machines

* Theorems

AI is fundamentally theorems operating as machines which wield tools. That is why it will be so powerful a technology.

It does need both gentle and wise guidance from humans, however. Worry less about the shape of the Elephant and more the quality of idiots poking it!

This is how everything works, even physics. Different people have different ideas and they try to prove their ideas work and we take the ideas that show promise and work with them.

Nobody knows the absolute right way, and there may not even be one, we just keep running with whatever approaches are producing practical value.

I love how AI people think this is their problem to solve. I appreciate the advances in LLMs and I certainly would love to see this extended to other domains, but ultimately, this is a psychology/neuroscience problem, and sadly, the state of those fields is not that great when it comes to agreeing on a definition of intelligence, let alone an understanding of the implementation of intelligence at the level of the brain.

If you delve into the consciousness field, there are a few quasi-mechanistic approaches that do not invoke crazy things like Dehaene’s global workspace theory – there is actually some pretty decent insight regarding large populations of neurons and how they interact to produce consciousness – but there is still utter quackery by folks like Tononi and the integrated information theory which posits that consciousness is just connectivity (so your computer is conscious). You also have even more insane stuff like Penrose’s microtubule “quantum vibrations” idea which … yeah.

Point is, there is no cohesive theory of intelligence or consciousness in biology, and until that problem is solved, I don’t think we can conclusively say we’ve achieved AGI because we lack the framework to even make that determination.

That said, I think LLMs are evidence that you can solve certain problems – basically grammar extraction, generative grammar, etc. – even if you do not understand why/how you solved it. I would put conv nets in contrast to this, because I see conv nets as implementing many of the properties of visual cortex to solve visual classification problems and we have a reasonable understanding of how they actually work. But LLMs are a bit black boxish even now, and it is entirely possible we will achieve AGI without really knowing how/why. This should give us all pause because one key element of an AGI is that it has its own goals/desires, and if you have that in a machine that can wield massive resources, well … it could get interesting.

Part of the reason for that is that AGI is a buzzword adapted from a sci-fi concept and has no basis in modern day reality. Only reason we keep talking about it is because AI companies know that by dangling the shiny chrome future in front of investors, they can drive more investment.

If you want to know when we are actually close to AGI it’s actually pretty simple.

When an AI company is willing to make itself fully legally and financially responsible for the consequences of its model’s actions. Or at a minimum insurance companies are willing to shoulder this risk for a reasonable price.

Until this happens, humans will never fully be replaced by AI, no matter what Elon Musk would lead you to believe. Companies need humans in jobs because humans can be blamed for failures / illegal activities. Humans can be fired, sued, held criminally liable, etc.

This isn’t true for every job of course, and I’m not saying that companies aren’t going to fire as many people as they can get away with, but at the end of the day they will need some number of real people in specific positions, if for nothing more than accountability purposes.

For example, on paper, CEO is a great candidate to be fully replaced by AI. Pretty much their entire job description is to make presentations, draft emails, and make decisions void of all emotion, optimized for shareholder profit. But what will the shareholders do when their stock price tanks and “it’s the AI’s fault”? With a human CEO, they can just get rid of him, get a new one, and make everyone feel like changes are being made to alleviate the problem.