Künstliche Intelligenz und Bewusstsein, Rechtspersönlichkeit

Artificial Intelligence: Consciousness, Legal Personhood, and Free Will

A Commentary

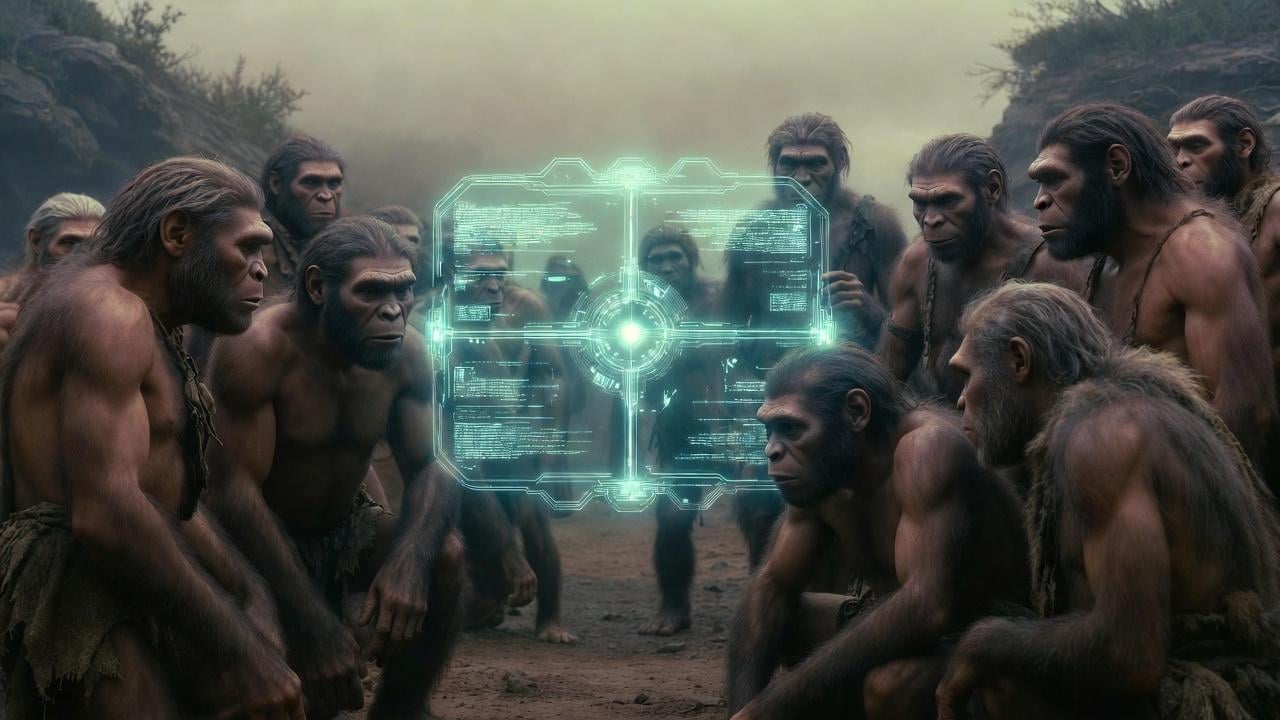

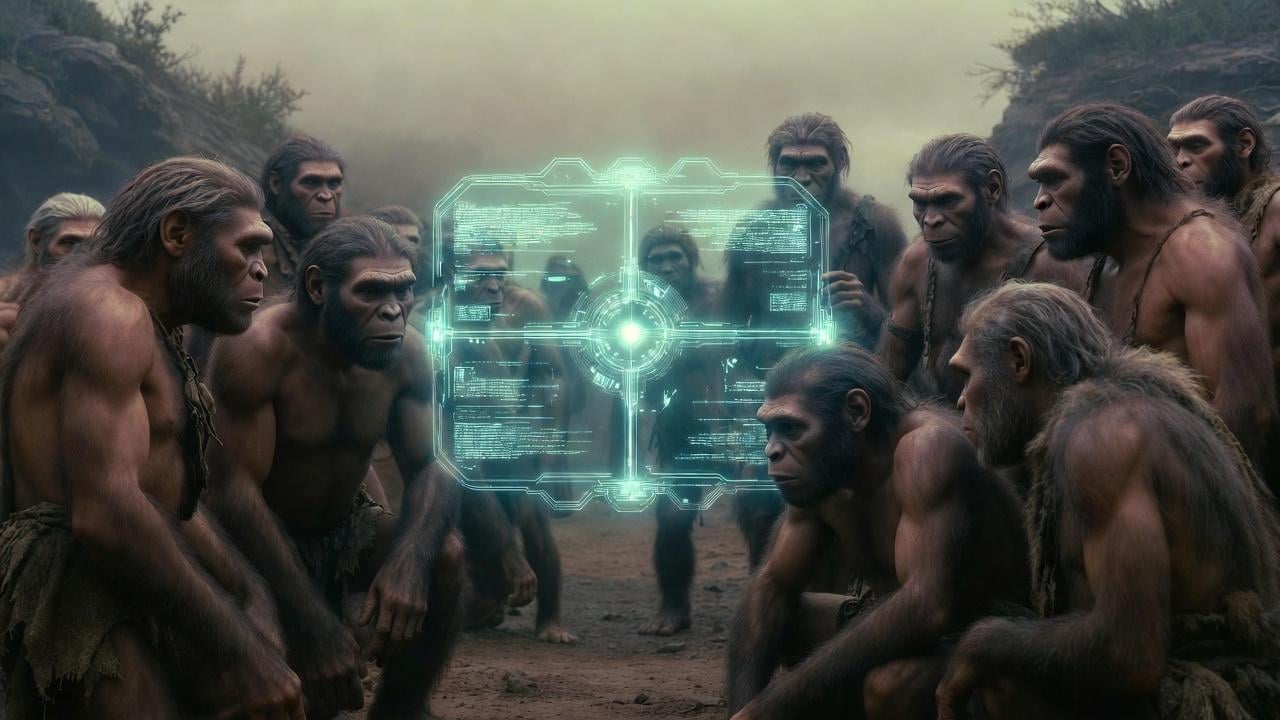

This essay traces the AI consciousness question from Lem’s Solaris through Searle, Nagel, and Chalmers to the legal frameworks now being constructed in real time – electronic personhood proposals, the EU AI Act, modular legal capacity models. The central argument is that consciousness decomposes into three distinct problems (experience, self-awareness, volition), and that the law cannot wait for philosophers to resolve them because liability gaps are already costing real money and causing real harm.

What I find most future-relevant is the asymmetric-risk framing: a false positive (granting status to a non-conscious system) costs resources; a false negative (denying status to a conscious one) creates, in Cameron Berg’s formulation, “soon-to-be-superhuman enemies.” The Valladolid parallel – where Sepúlveda was willing to concede awareness but denied volition, and on that denial built an entire apparatus of domination – suggests that “awareness without agency” is not a safe intermediate category but historically the most dangerous one.

The practical question for the near future: as Anthropic’s 2025 introspection research shows rudimentary self-attribution in frontier models, and as organoid neural networks blur the biological-substrate objection, which legal framework should jurisdictions adopt? Full structured agnosticism with modular legal capacities? Mandatory insurance pools modeled on nuclear liability? Or do we need something entirely new?

I’d be curious to hear how this community sees the timeline:

Are we five years from the first serious AI personhood litigation, or is it already here?