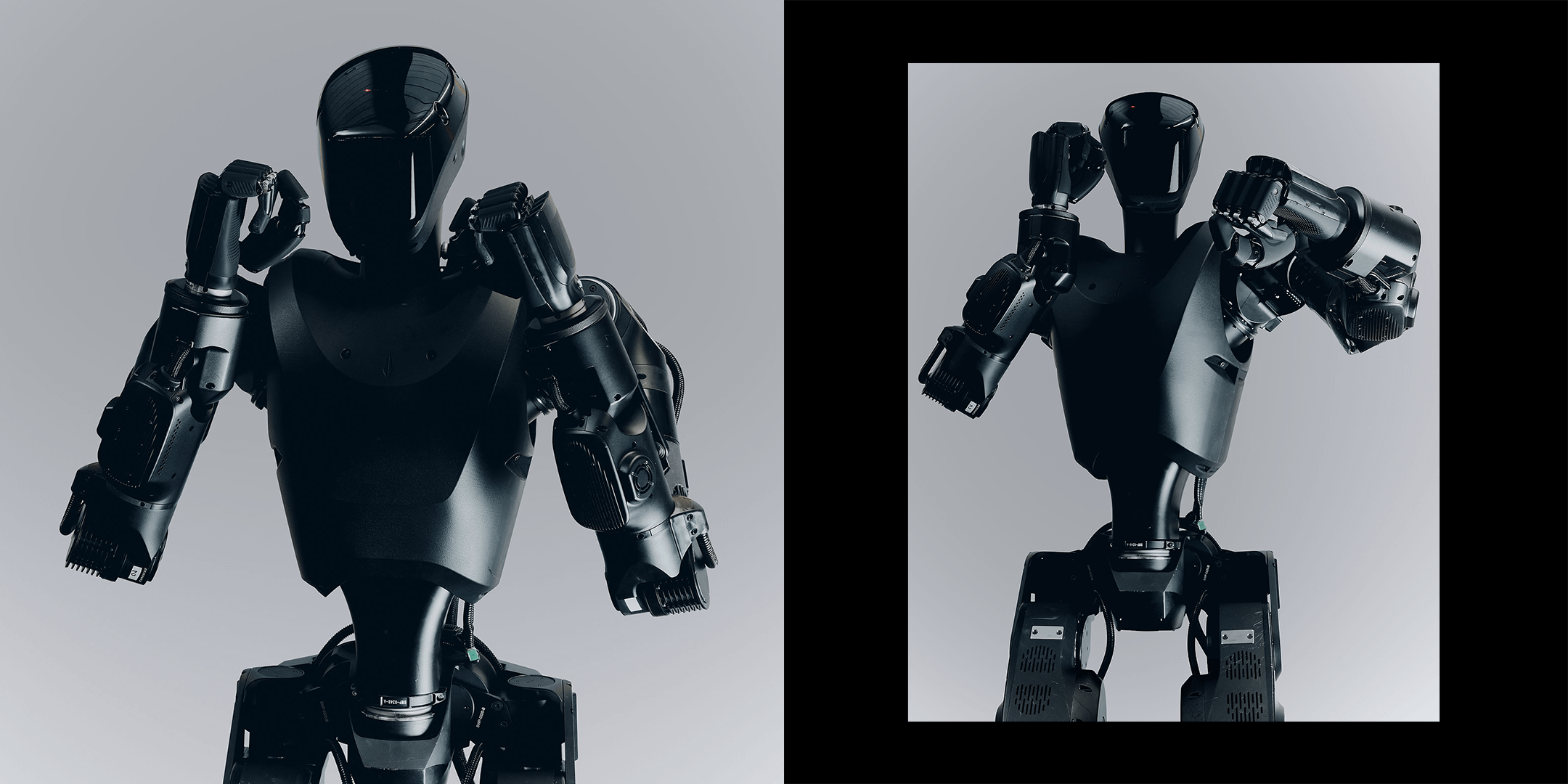

TL;DR: Tech companies like Foundation are quite literally building humanoid Terminators to replace human infantry on the battlefield. They have a robot called the Phantom MK-1 that they are already testing in countries like Ukraine and pitching hard to the Pentagon for everything from kicking in doors to border patrol. The startup executives selling these machines claim they will save lives and stop war crimes because robots do not get post-traumatic stress disorder and do not get tired. But critics are rightly panicking, because we are handing the kill chain to AI software that still hallucinates basic facts. We are talking about heavily armed machines with no moral compass whatsoever making lethal decisions while deliberately sidestepping international law and any real human accountability.

My take: For the great powers, the war between the U.S. and Iran will be the last major war in which human soldiers dominate. We have definitively passed the point of no return. From now on, China, the U.S., Russia, European countries, Japan, Israel and other large and/or developed countries will predominantly deploy robot soldiers. There is no chance these governments will go back to letting their citizens bleed out in the mud when they can mass-produce expendable machines that do not hesitate and do not come home in body bags. Any nation that refuses to adapt to fully automated warfare will simply be wiped off the map by those that embrace it. The era of human infantry is over for good, and anyone who argues otherwise is living in pure delusion.

https://time.com/article/2026/03/09/ai-robots-soldiers-war/

7 comments

This article matters because it is not really about some far-off sci-fi fantasy. It is about a real company, Foundation, building a humanoid military robot called Phantom MK-1, and TIME reports that the firm already has $24 million in U.S. Army, Navy and Air Force research contracts. According to the article, two Phantom units were sent to Ukraine in February for initial frontline reconnaissance support, the company is preparing Marine Corps breaching tests, and its co-founder says it is also in close contact with DHS about possible border patrol roles. That alone should end the lazy assumption that “robot soldiers” are still just movie material.

What makes this bigger is that the article places Phantom inside a battlefield trend that is already happening now, not someday. TIME describes Ukraine as a testing ground for automating parts of the kill chain, reports that the country launches up to 9,000 drones per day, and notes that AI-enhanced systems can keep attacking when communications fail. At the same time, the Pentagon’s own public guidance still says commanders and operators must exercise appropriate levels of human judgment over the use of force, while the U.N. secretary-general has called weapons that can take lives without human control politically unacceptable and morally repugnant. So the real issue is no longer whether autonomy is coming to warfare. It is how much of the decision chain states will hand over, how fast they will do it and who gets blamed when the machine gets it wrong.

My own view is that this is the point of no return for major powers. I do not think countries such as the U.S., China, Russia, European states, Japan and Israel will keep relying primarily on human infantry once autonomous and semi-autonomous systems become cheaper, more scalable and politically easier to deploy. The article itself raises the darkest part of that future: robots can be hacked or spoofed, can misread chaotic situations, and can lower the political cost of war by removing flag-draped coffins from public view. Machines are not moral agents, and they do not carry legal responsibility. Humans still do. That is exactly why this transition is so dangerous. The era of human-dominant warfare is not ending because automation is ethically superior. It is ending because states that believe their rivals are automating faster will feel forced to follow, whether the world is morally ready or not.

Wouldn’t surprise me if China already had warehouses full of these (probably much superior models) waiting to go. I would bet money they have thousands of them assembled and ready to deploy as the front-line assault force, with the human soldiers coming in later.

Two thoughts on this matter:

1) There is no way these machines are intelligent enough at the moment to fight autonomously. So at best we have a company that is doing some early and limited field testing. I know that Ukraine has already deployed semi-automatic killing machines that hold the line in very dire circumstances.

2) The hypocrisy is real. Ukraine is fighting for its survival. The West is barely doing enough to keep Ukraine in the fight. The Ukrainians are looking for any tech that could help them. If the West were at all serious about preventing a drift towards autonomous killing machines, we would not be writing articles but sending more conventional weapons and money, and refraining from starting pointless new wars that push poorer countries towards thinking about such machines.

But voters won’t allow them to be used for law enforcement on U.S. soil… We’ll have to think up some way to put a human face on it… Some kind of robo cop…

“prevent genocides”

I’m pretty sure this is just a genocide machine, actually. One deranged ruler can have the power to pull off an Order 66 and slaughter whoever they desire.

They can’t make a proper unmanned turret for tanks, but they will replace infantry with droids? I don’t think so.

I mean, we are already at the technology point where swarms of cheap AI kamikaze drones can be released from a fleet of drone subs off a coast and target critical infrastructure to decimate cities. Compared to that WMD-level weapon, this isn’t nearly as bad. There have already been efforts to get large-scale AI drone swarms labeled as WMDs.

On a more positive note, American Long Range Anti-Ship Missiles, which can be fired from outside visual range at a radar signature, leverage AI to analyze the ship and make sure it is an enemy ship. This way, if a civilian ship is in the wrong place at the wrong time, the missile won’t kill a bunch of innocent people. Just saying, AI can be used to reduce civilian casualties that happen due to human error.

Fully removing the human from the loop/kill chain is when we run into major issues. Even if it leads to fewer civilian casualties than human use, it opens a Pandora’s box of accountability, oversight and morality. Sadly, just like every Pandora’s box involving weaponry, it will be opened by someone, and likely soon. Let’s just hope it will be used as a deterrent and treated as a WMD by the superpowers.